Bot Detection & Edge Routing

Serve the right content to the right visitor — instantly.

DataJelly intelligently identifies search engine bots, AI crawlers, and human users at the edge, ensuring every visitor receives the correct version of your site for optimal SEO, AI visibility, and performance.

What DataJelly Is

DataJelly is an automated server-side rendering and AI SEO platform that converts your JavaScript-heavy website into clean, fully ingestible HTML for Google, ChatGPT, Perplexity, and other AI crawlers.

No rebuilds. No SSR migration. No code changes.

Why Bot Detection Matters

Modern search engines and AI systems rely on accurate crawling. But Single Page Applications often break because:

- Many bots don't run JavaScript, or run it unreliably

- SPAs return empty shells or delayed metadata

- Route changes aren't detected

- AI crawlers misclassify content

- Dynamic rendering confuses indexing systems

If bots receive the wrong content, your site's visibility in search and AI results suffers.

Bot detection is the foundation of all SEO and AI visibility. DataJelly ensures bots always get the correct version of your site.
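To make the "empty shell" problem concrete, here is a minimal sketch of how you might check what a crawler that does not run JavaScript would see in a raw HTML response. The `looks_like_empty_shell` helper and its 200-character threshold are illustrative assumptions, not part of DataJelly.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts text characters outside script, style, and template elements."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.visible_chars = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only count text that a non-JavaScript crawler could actually read.
        if self._skip_depth == 0:
            self.visible_chars += len(data.strip())


def looks_like_empty_shell(html: str, min_visible_chars: int = 200) -> bool:
    """Return True when the raw HTML carries too little text to index.

    The threshold is an arbitrary, hypothetical cut-off for illustration.
    """
    parser = VisibleTextCounter()
    parser.feed(html)
    parser.close()
    return parser.visible_chars < min_visible_chars
```

A typical SPA response containing only a title, an empty root `div`, and a script tag would be flagged, while a pre-rendered page with real body text would not.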

How DataJelly's Bot Detection Works

DataJelly uses a multi-layer detection system built for 2026-era AI crawlers and search engines.

1. User-Agent Validation

We analyze user agents for Googlebot, Bingbot, ChatGPT-User, ClaudeBot, PerplexityBot, and dozens more.
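As a rough illustration of this first layer, the sketch below matches a request's claimed user agent against the crawler names listed above. The function name, the two categories, and the simple substring match are assumptions made for the example; a user-agent match alone proves nothing, since the header is trivially spoofed.

```python
from typing import Optional

# Tokens taken from the crawler names mentioned in the text,
# compared case-insensitively against the User-Agent header.
SEARCH_BOT_TOKENS = ("googlebot", "bingbot")
AI_BOT_TOKENS = ("chatgpt-user", "claudebot", "perplexitybot")


def classify_user_agent(user_agent: Optional[str]) -> str:
    """Return 'search_bot', 'ai_bot', or 'unknown' for a claimed user agent."""
    ua = (user_agent or "").lower()
    if any(token in ua for token in SEARCH_BOT_TOKENS):
        return "search_bot"
    if any(token in ua for token in AI_BOT_TOKENS):
        return "ai_bot"
    return "unknown"
```

The result is only a claim to be checked by the later layers, not a final classification.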

2. Reverse DNS Verification

We verify the requesting IP with a reverse DNS lookup and check it against official crawler ranges (Google, OpenAI, Microsoft, Anthropic, etc.) to prevent spoofing.
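One common way to do this kind of check is forward-confirmed reverse DNS: resolve the IP to a hostname, confirm the hostname belongs to the crawler's domain, then resolve the hostname back and confirm it returns the same IP. The sketch below is a generic illustration, not DataJelly's implementation. The hostname suffixes reflect my understanding of Google's and Microsoft's published guidance and should be verified against their current documentation; the function and parameter names are hypothetical.

```python
import socket
from typing import Callable, List

# Assumed hostname suffixes for verified crawlers; check the vendors' docs.
CRAWLER_HOST_SUFFIXES = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def _reverse_lookup(ip: str) -> str:
    # gethostbyaddr returns (hostname, aliases, addresses).
    return socket.gethostbyaddr(ip)[0]


def _forward_lookup(hostname: str) -> List[str]:
    # gethostbyname_ex is IPv4-only; an IPv6 check would need getaddrinfo.
    return socket.gethostbyname_ex(hostname)[2]


def verify_crawler_ip(
    ip: str,
    claimed_bot: str,
    reverse_lookup: Callable[[str], str] = _reverse_lookup,
    forward_lookup: Callable[[str], List[str]] = _forward_lookup,
) -> bool:
    """Return True only if reverse and forward DNS both confirm the claim."""
    suffixes = CRAWLER_HOST_SUFFIXES.get(claimed_bot)
    if not suffixes:
        return False
    try:
        hostname = reverse_lookup(ip).rstrip(".").lower()
        if not hostname.endswith(suffixes):
            return False
        # The forward lookup must map back to the original IP.
        return ip in forward_lookup(hostname)
    except OSError:
        # Covers socket.herror and socket.gaierror (failed lookups).
        return False
```

The resolver functions are injectable so the logic can be exercised without network access, and so results can be cached in production.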

3. AI Crawler Recognition

We identify all major LLM retrieval systems, including ChatGPT, Perplexity, Claude, Bing AI, Google AI Overviews, and enterprise RAG systems.

4. Behavior & Rate Profiling

We detect crawling patterns, not just headers — ensuring high accuracy even for new or evolving AI bots.
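A very simple form of rate profiling is a sliding-window request counter per client. The sketch below is only one possible approach; the `RateProfiler` class, the 10-second window, and the 20-request limit are hypothetical values chosen for illustration, and real behavior profiling would likely weigh many more signals.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class RateProfiler:
    """Flags clients whose request rate exceeds a sliding-window limit."""

    def __init__(self, window_seconds: float = 10.0, max_requests: int = 20):
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, client_ip: str, timestamp: float) -> bool:
        """Record one request; return True if the client looks automated."""
        hits = self._hits[client_ip]
        hits.append(timestamp)
        # Discard requests that have fallen out of the sliding window.
        while hits and timestamp - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests
```

Timestamps are passed in explicitly (for example from `time.monotonic()`), which keeps the class deterministic and easy to test.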

5. Real-Time Edge Decisioning

Routing decisions happen at the edge — no latency, no delays.

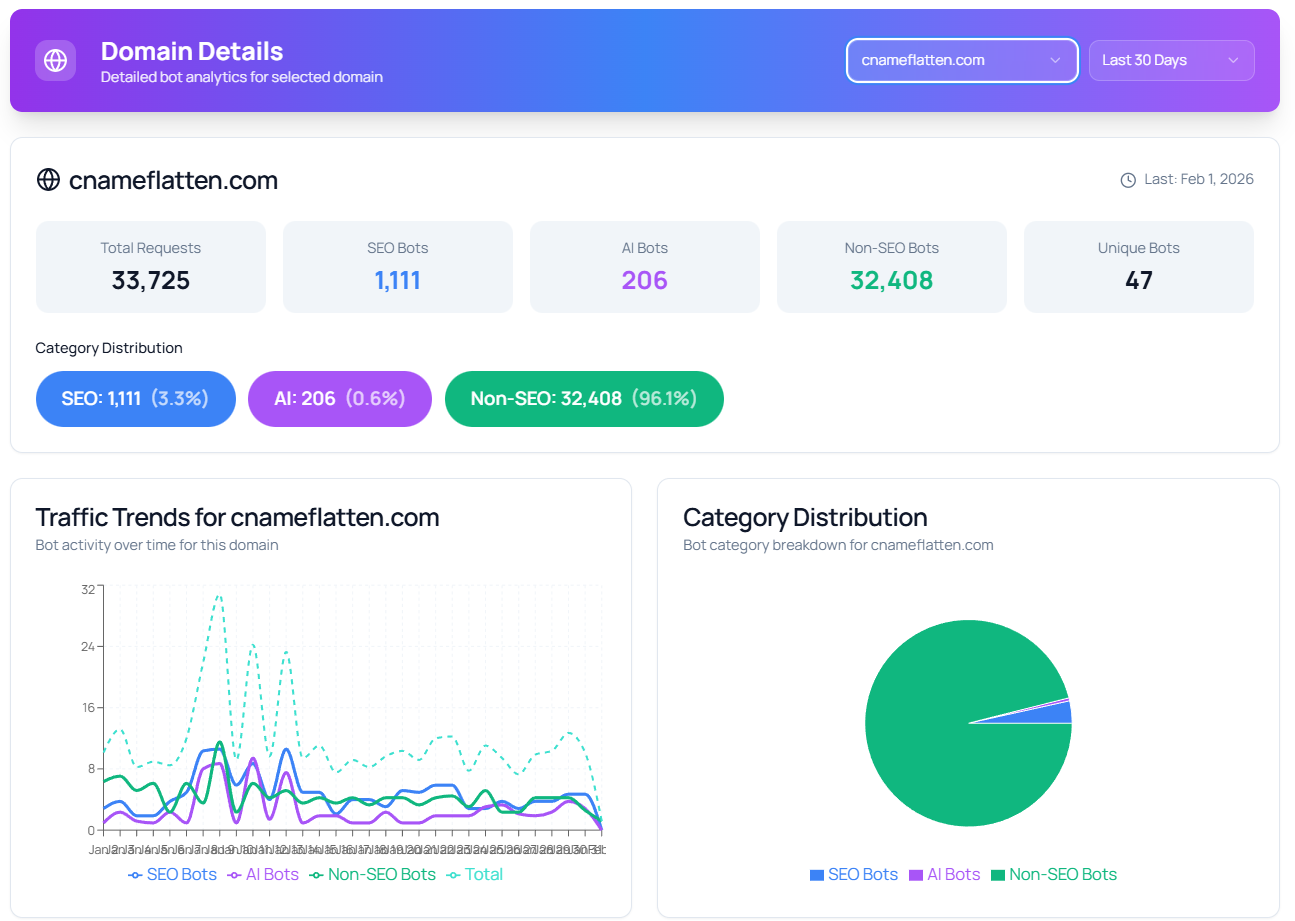

Real-time bot traffic analytics: SEO bots, AI crawlers, and non-SEO traffic categorized automatically.

Smart Routing for Every Visitor

Once identified, DataJelly routes traffic to the correct experience:

If it's a bot → Serve AI-ready snapshots

Bots receive:

- Fully rendered HTML

- Clean metadata

- Structured data

- Stable content

- Canonical routing

If it's a human → Serve your SPA normally

Humans get:

- Fast client-side rendering

- Your full interactive app

- Zero SEO compromises

Your site behaves like an SSR app for bots — and like an SPA for users.

Why This Matters for AI SEO

AI engines require consistency: bots must see your site the same way every time.

DataJelly ensures:

- AI crawlers never receive a blank SPA shell

- Google never misses your metadata

- Perplexity and ChatGPT can ingest your full page

- Edge routing always delivers the correct snapshot

This is the backbone of GEO (Generative Engine Optimization).

Key Features of Bot Detection & Routing

Guaranteed Bot Accuracy

Multi-step validation prevents spoofing and misclassification.

Zero Performance Cost

All decisions are made at the edge — no delays, no server load.

Instant Routing Logic

Humans and bots are instantly split into the correct path before your SPA loads.

AI Crawler Support

Full compatibility with ChatGPT Search, Google AI Overviews, Perplexity, Bing Copilot, and Claude RAG systems.

Integrated with Snapshots

Detection fully ties into DataJelly's rendering and AI SEO pipeline.

Works with Any Framework

Lovable, V0, Bolt.dev, React, Vue, Angular, Vite, and more.

Example Routing Flow

1. Visitor arrives at your domain

2. DataJelly analyzes user-agent + IP + behavior

3. Detection layer classifies the visitor as Human or Bot

4. If Bot → Serve snapshot (SSR-like HTML)

5. If Human → Serve SPA

6. AI crawlers index your content correctly

7. Users see your fast, interactive app
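The flow above can be sketched as a small decision function. This is a simplified illustration under stated assumptions, not DataJelly's actual logic: the `Visitor` fields, the `classify` and `route` names, and the choice to send an unverified bot claim down the normal SPA path are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Visitor:
    claims_bot_user_agent: bool  # user agent matched a known crawler name
    ip_verified: bool            # IP check confirmed the claimed crawler
    automated_behavior: bool     # request pattern looked like crawling


def classify(visitor: Visitor) -> str:
    """Return 'bot' or 'human' from the three detection signals."""
    if visitor.claims_bot_user_agent and visitor.ip_verified:
        return "bot"
    # Assumption: an unannounced client that crawls like a bot is treated as one.
    if not visitor.claims_bot_user_agent and visitor.automated_behavior:
        return "bot"
    # Assumption: a spoofed (unverified) bot claim gets no snapshot.
    return "human"


def route(visitor: Visitor) -> str:
    """Return which experience to serve: 'snapshot' for bots, 'spa' for humans."""
    return "snapshot" if classify(visitor) == "bot" else "spa"
```

For example, `route(Visitor(True, True, False))` returns `"snapshot"`, while `route(Visitor(False, False, False))` returns `"spa"`.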

DataJelly vs DIY Bot Detection

| Capability | DIY / Homegrown | DataJelly |
|---|---|---|
| Detect AI crawlers | No | Yes |
| Detect Google / Bing reliably | Sometimes | Yes |
| IP verification | Difficult | Automatic |
| Spoofing protection | Weak | Strong |
| Edge routing | No | Yes |
| Integrated snapshots | No | Yes |
| GEO alignment | No | Full |

DIY bot detection fails frequently — DataJelly is accurate, robust, and automated.

Frequently Asked Questions

1. What is bot detection?

Bot detection is the process of identifying whether a visitor to your website is a human user or an automated bot (like a search engine crawler or AI agent). This allows you to serve optimized content to each visitor type for better SEO and user experience.

2. Why do SPAs need bot detection?

Single Page Applications rely on JavaScript to render content. Search engine bots and AI crawlers often don't execute JavaScript properly, so they see only empty shells. Bot detection allows you to serve pre-rendered HTML to bots while humans get your fast, interactive SPA.

3. How does DataJelly detect bots?

DataJelly uses a multi-layer detection system including user-agent validation, reverse DNS verification against official crawler IP ranges, AI crawler pattern recognition, behavior profiling, and real-time edge decisioning.

4. Does bot detection slow down my site?

No. DataJelly's bot detection happens at the edge with zero latency. Routing decisions are made instantly before your SPA even loads, so there's no performance impact for either bots or human users.

5. Can bots spoof their identity to bypass detection?

DataJelly prevents spoofing through reverse DNS verification, validating that requesting IPs actually belong to the claimed crawler (Google, OpenAI, Microsoft, Anthropic, etc.). Simple user-agent spoofing is detected and blocked.

6. Which AI crawlers does DataJelly support?

DataJelly detects all major AI crawlers including ChatGPT-User, PerplexityBot, ClaudeBot, Bing AI crawlers, Google AI Overview systems, and common enterprise RAG systems. We continuously update our detection for new crawlers.

7. What happens after a bot is detected?

Once a bot is detected, DataJelly routes it to receive a pre-rendered HTML snapshot with fully rendered content, clean metadata, and structured data. Humans continue to receive your normal interactive SPA.

8. Is bot detection the same as bot blocking?

No. DataJelly's bot detection is about serving optimized content to bots, not blocking them. We want search engines and AI crawlers to see and index your content — we just serve them a version they can actually read.

9. How is this different from DIY bot detection?

DIY solutions typically rely only on user-agent strings, which are easily spoofed, and they often miss AI crawlers. DataJelly provides IP verification, behavior analysis, AI crawler recognition, edge routing, and integrated snapshot delivery, all automatically.

10. Does DataJelly work with my existing framework?

Yes. DataJelly works with any JavaScript framework including React, Vue, Angular, Vite, and platforms like Lovable, V0, and Bolt.dev. No code changes or framework migration required.

Start Routing Bots the Right Way

Bot detection is the first step in SEO and AI discovery. Let DataJelly handle it automatically.